HybridCache in ASP.NET Core - .NET 9

The background

Caching is a mechanism to store frequently used data in a temporary storage layer so that future requests for the same data can be served faster, reducing the need for repetitive data fetching or computation. ASP.NET Core provides multiple types of caching solutions that can be tailored to your application's needs.

The two most commonly used are:

- InMemory Cache

- Distributed Cache

In-memory caching stores data directly in the server's memory, making it fast and easy to implement using ASP.NET Core's IMemoryCache. It's ideal for single-server applications or scenarios where cached data doesn't need to persist across restarts. While highly performant, it's unsuitable for distributed environments as the data is not shared between servers.

Distributed caching stores data in a centralized external service like Redis or SQL Server, making it accessible across multiple servers. ASP.NET Core supports this via IDistributedCache, ensuring data consistency and persistence even in load-balanced environments. Though slightly slower due to network calls, it's ideal for scalable, cloud-based applications.

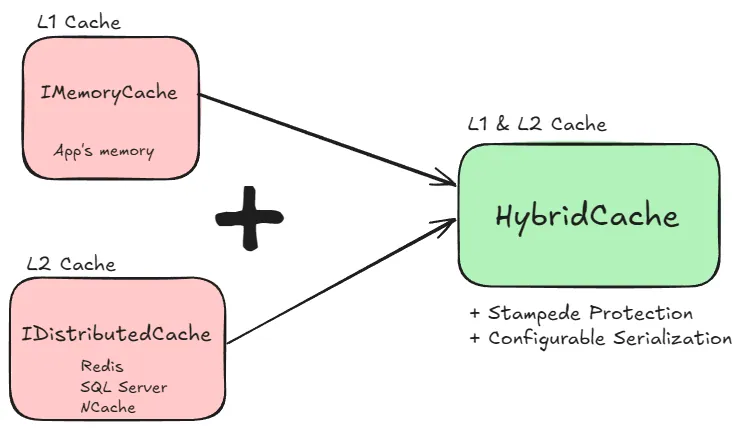

.NET 9 introduces HybridCache, a caching mechanism that combines the speed of in-memory caching with the scalability of distributed caching. This dual-layer approach enhances application performance and scalability by leveraging both local and distributed storage.

Let's see why this feature is interesting and how to implement it.

What is HybridCache?

The HybridCache API bridges some gaps in the IDistributedCache and IMemoryCache APIs.

HybridCache is an abstract class with a default implementation that handles most aspects of saving to cache and retrieving from cache.

In addition, the HybridCache also includes some important features relevant to the caching process. Those are:

- Two-Level Caching (L1/L2): Uses a fast in-memory cache (L1) for quick data retrieval and a distributed cache (L2) to share data across multiple application instances.

- Stampede Protection: When many concurrent requests miss on the same key, only one of them fetches the data while the others wait for its result, so the underlying data source is not hit repeatedly.

- Tag-Based Invalidation: Lets you group cache entries with tags so that related entries can be invalidated together.

- Configurable Serialization: Lets you customize how values are serialized, supporting formats like JSON, Protobuf, or XML, to suit specific application needs.
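To make the stampede protection idea concrete, here is a minimal sketch of request coalescing in plain C#. It is not how HybridCache is implemented internally; `SingleFlight<T>` and `RunAsync` are hypothetical names used only for illustration, and the sketch assumes all concurrent callers for a key want the same value.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical helper (not part of HybridCache): coalesces concurrent loads per key.
public sealed class SingleFlight<T>
{
    // One in-flight load per key; Lazy makes sure the stored factory runs only once.
    private readonly ConcurrentDictionary<string, Lazy<Task<T>>> _inFlight = new();

    public async Task<T> RunAsync(string key, Func<Task<T>> factory)
    {
        // Under contention GetOrAdd may construct several Lazy objects,
        // but only one is stored and only that one ever invokes the factory.
        var lazy = _inFlight.GetOrAdd(
            key,
            _ => new Lazy<Task<T>>(factory, LazyThreadSafetyMode.ExecutionAndPublication));
        try
        {
            // Every concurrent caller awaits the same task.
            return await lazy.Value;
        }
        finally
        {
            // Remove this exact entry so a later call starts a fresh load.
            _inFlight.TryRemove(new KeyValuePair<string, Lazy<Task<T>>>(key, lazy));
        }
    }
}
```

With this helper, 100 simultaneous calls to `RunAsync("products:category:electronics", LoadFromDatabase)` would share a single database call. HybridCache gives you this behavior through `GetOrCreateAsync`, so you do not need to write it yourself.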

Let's see how to implement it.

How to implement HybridCache in .NET 9?

To integrate HybridCache into an ASP.NET Core application:

1. Install the NuGet Package:

dotnet add package Microsoft.Extensions.Caching.Hybrid --version "9.0.0-preview.7.24406.2"

2. Register the Service:

    builder.Services.AddHybridCache(options =>
    {
        options.MaximumPayloadBytes = 1024 * 1024; // 1 MB
        options.MaximumKeyLength = 512;
        options.DefaultEntryOptions = new HybridCacheEntryOptions
        {
            Expiration = TimeSpan.FromMinutes(30),
            LocalCacheExpiration = TimeSpan.FromMinutes(30)
        };
    });

The following properties of HybridCacheOptions let you configure limits that apply to all cache entries:

MaximumPayloadBytes - Maximum size of a cache entry. Default value is 1 MB. Attempts to store values over this size are logged, and the value isn't stored in cache.

MaximumKeyLength - Maximum length of a cache key. Default value is 1024 characters. Attempts to store values under a key longer than this are logged, and the value isn't stored in cache.

3. Configure Distributed Cache (Optional): To utilize a distributed cache like Redis:

    builder.Services.AddStackExchangeRedisCache(options =>
    {
        options.Configuration = "connectionString";
    });

This step is optional because HybridCache can work with only the in-memory (L1) cache. With the registration in place, you are ready to use it.

How to use HybridCache?

Scenario: Product API with Caching

We have an API that provides product information. Frequently accessed data will be cached for improved performance:

1. L1 (In-memory): To serve fast reads from local cache.
2. L2 (Redis): To ensure data consistency across distributed instances.

    public class ProductService(HybridCache cache)
    {
        public async Task<List<Product>> GetProductsByCategoryAsync(
            string category,
            CancellationToken cancellationToken = default)
        {
            string cacheKey = $"products:category:{category}";

            // Use HybridCache to fetch data from either L1 or L2
            return await cache.GetOrCreateAsync(
                cacheKey,
                async token => await FetchProductsFromDatabaseAsync(category, token),
                new HybridCacheEntryOptions
                {
                    Expiration = TimeSpan.FromMinutes(30),         // Shared expiration for L1 and L2
                    LocalCacheExpiration = TimeSpan.FromMinutes(5) // L1 expiration
                },
                null,
                cancellationToken
            );
        }
    }

How It Works

Cache Lookup:

- The method first checks if the cacheKey exists in HybridCache.

- If found, the cached data is returned (from L1 if available; otherwise, from L2).

Cache Miss:

- If the data is in neither the L1 nor the L2 cache, the delegate (FetchProductsFromDatabaseAsync) is invoked to fetch data from the database.

Caching the Data:

- Once the data is retrieved, it is stored in both L1 and L2 caches with the specified expiration policies.

Response:

- The method returns the list of products, either from the cache or after fetching from the database.
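The lookup order described above can be modeled with a toy class. This is only a sketch of the flow, assuming two plain dictionaries stand in for the L1 and L2 stores; `TwoLevelLookup<T>` is a hypothetical name, and the sketch ignores expiration, serialization, and thread safety, all of which HybridCache handles for you.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Toy model only: two dictionaries stand in for the local (L1) and shared (L2) stores.
public sealed class TwoLevelLookup<T>
{
    public Dictionary<string, T> L1 { get; } = new();
    public Dictionary<string, T> L2 { get; } = new();

    public async Task<T> GetOrCreateAsync(string key, Func<Task<T>> factory)
    {
        // 1. Cache lookup: L1 first.
        if (L1.TryGetValue(key, out var local))
        {
            return local;
        }

        // 2. L1 miss: try L2 and copy the value back into L1.
        if (L2.TryGetValue(key, out var shared))
        {
            L1[key] = shared;
            return shared;
        }

        // 3. Miss in both layers: run the factory (for example, a database query).
        var value = await factory();

        // 4. Store the result in both layers before returning it.
        L2[key] = value;
        L1[key] = value;
        return value;
    }
}
```

If you clear `L1` after the first call and call `GetOrCreateAsync` again, the value comes from `L2` and the factory is not invoked a second time.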

Note: the null argument is the tags parameter, which is covered in the tag-based invalidation section below.

How to remove data from cache?

To remove items from the HybridCache, you can use the RemoveAsync method, which removes the specified key from both L1 (memory) and L2 (distributed) caches.

Here's how you can do it:

    public async Task RemoveProductsByCategoryFromCacheAsync(
        string category,
        CancellationToken cancellationToken = default)
    {
        string cacheKey = $"products:category:{category}";

        // Remove the cache entry from both L1 and L2
        await cache.RemoveAsync(cacheKey, cancellationToken);
    }

Effect: The entry is removed from both L1 and L2 caches. If the key doesn't exist, the operation has no effect.

Future: Tag-Based Invalidation with HybridCache

Adding Entries with Tags

When storing entries in the cache, you can assign tags to group them logically. For example, you can assign the same tag ("category:electronics") to all product entries in the "Electronics" category.

    public async Task AddProductsToCacheAsync(
        List<Product> products,
        string category,
        CancellationToken cancellationToken = default)
    {
        string cacheKey = $"products:category:{category}";

        await cache.SetAsync(
            cacheKey,
            products,
            new HybridCacheEntryOptions
            {
                Expiration = TimeSpan.FromMinutes(30),         // Set expiration
                LocalCacheExpiration = TimeSpan.FromMinutes(5) // L1 expiration
            },
            new[] { $"category:{category}" },                  // Tags are passed as a separate argument
            cancellationToken
        );
    }

Removing Entries by Tag

To remove all cache entries associated with a specific tag, call RemoveByTagAsync.

In the method below, categoryTag could be "category:electronics", which removes every cache entry tagged with "category:electronics".

    public async Task InvalidateCacheByTagAsync(
        string categoryTag,
        CancellationToken cancellationToken = default)
    {
        // Use the tag to remove all associated cache entries
        await cache.RemoveByTagAsync(categoryTag, cancellationToken);
    }

Limitations

Preview Feature: As of now, the implementation of tag-based invalidation in HybridCache is still in progress, and it may not work fully in preview versions of .NET 9.

Fallback: If tag-based invalidation is not available in your setup, you'll need to manually track and remove entries by key.
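One way to do that manual tracking is a small index from tag to keys. The sketch below is an assumption-heavy illustration, not a HybridCache feature: `TagIndex`, `Track`, and `Take` are hypothetical names, and the index lives in the memory of a single process, so it only knows about keys that this instance stored.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;

// Hypothetical helper: remembers which cache keys were stored under which tag.
public sealed class TagIndex
{
    private readonly ConcurrentDictionary<string, HashSet<string>> _keysByTag = new();

    // Call this whenever you store an entry that logically belongs to a tag.
    public void Track(string tag, string key)
    {
        var keys = _keysByTag.GetOrAdd(tag, _ => new HashSet<string>());
        lock (keys)
        {
            keys.Add(key);
        }
    }

    // Forgets the tag and returns the keys recorded for it (empty if the tag is unknown).
    // Note: a Track call racing with Take may add its key to the set being discarded.
    public IReadOnlyList<string> Take(string tag)
    {
        if (!_keysByTag.TryRemove(tag, out var keys))
        {
            return Array.Empty<string>();
        }

        lock (keys)
        {
            return new List<string>(keys);
        }
    }
}
```

To invalidate a category, you would loop over `index.Take("category:electronics")` and call `cache.RemoveAsync(key, cancellationToken)` for each returned key.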

Comparison with .NET 8

To get the same combination of in-memory and distributed caching in .NET 8, you would need to write the following code yourself:

    public async Task<List<Product>> GetProductsByCategoryAsync(string category)
    {
        string cacheKey = $"products:category:{category}";

        // L1 Cache Check
        if (_memoryCache.TryGetValue(cacheKey, out List<Product> products))
        {
            return products;
        }

        // L2 Cache Check
        var cachedData = await _redisCache.GetStringAsync(cacheKey);
        if (!string.IsNullOrEmpty(cachedData))
        {
            products = JsonSerializer.Deserialize<List<Product>>(cachedData);

            // Populate L1 Cache
            _memoryCache.Set(cacheKey, products, TimeSpan.FromMinutes(5));
            return products;
        }

        // If not found in caches, fetch from database
        products = await FetchProductsFromDatabaseAsync(category);

        // Cache in both L1 and L2
        _memoryCache.Set(cacheKey, products, TimeSpan.FromMinutes(5));
        await _redisCache.SetStringAsync(
            cacheKey,
            JsonSerializer.Serialize(products),
            new DistributedCacheEntryOptions
            {
                AbsoluteExpirationRelativeToNow = TimeSpan.FromMinutes(30)
            }
        );

        return products;
    }

Conclusion

In the .NET 9 Hybrid Cache version:

- The code is simpler and easier to maintain.

- Serialization is abstracted away.

- L1 and L2 synchronization is automatic, reducing complexity.

- Expiration policies are centralized and applied uniformly across layers.

Advantages

Performance Optimization:

- Frequently accessed data is served quickly from the L1 cache.

- Instances share data through the L2 cache, although each instance's L1 copy can stay stale until its local expiration elapses.

Automatic Synchronization:

- HybridCache handles the synchronization between L1 and L2 caches, reducing developer overhead.

Centralized Expiration Management:

- You can control cache lifetimes at both L1 and L2 levels with a single configuration.

Graceful Degradation:

- If the L1 entry expires, the L2 cache ensures the data is still available without querying the database.

This makes .NET 9 Hybrid Cache ideal for caching heavy, serialized data like lists of products in distributed API applications.

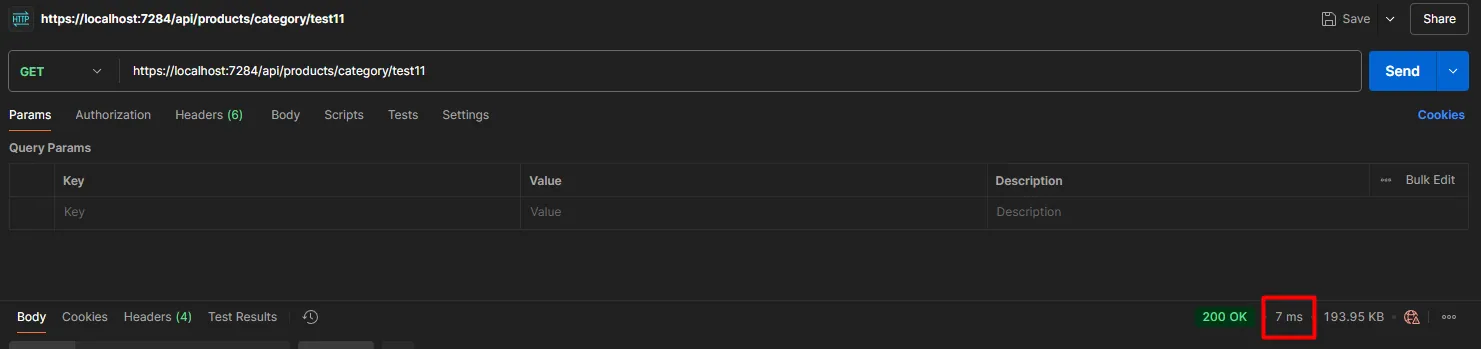

Performance comparison

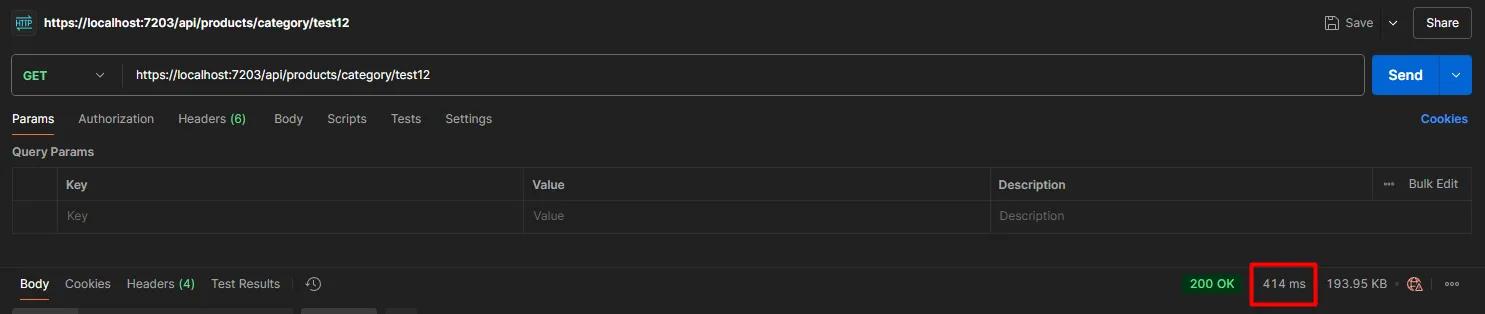

Given that this feature is still in prerelease mode, and not complete, it probably doesn't make much sense to compare performance, but I did it purely out of curiosity.

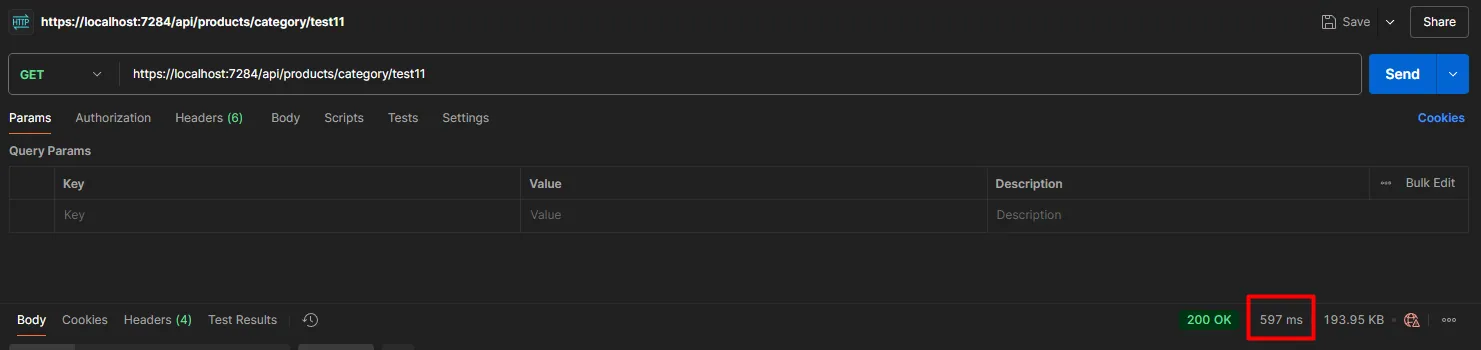

.NET 8 - Cache is not populated

.NET 8 - Returning values from the cache

.NET 9 - Cache is not populated

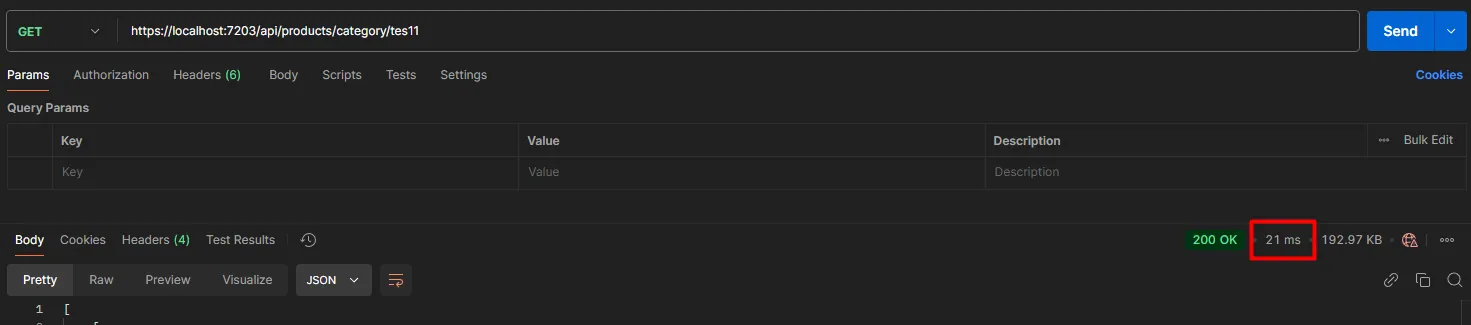

.NET 9 - Returning values from the cache

The difference I noticed is that adding values (1000 products) to the cache for the first time is about 100 ms faster with .NET 8.

Reading the values back from the cache, however, is faster with .NET 9.

We will see how this evolves as the feature matures.

Wrapping Up

The .NET 9 Hybrid Cache is a significant leap forward in simplifying and optimizing caching strategies for modern .NET applications.

By seamlessly combining the speed of in-memory caching (L1) with the scalability and consistency of distributed caching (L2), Hybrid Cache provides developers with a powerful and flexible tool to enhance application performance while maintaining data consistency across distributed systems.

Although HybridCache is currently in preview, features like tag-based invalidation will further streamline cache management once they are complete. As the ecosystem evolves, Hybrid Cache is poised to become the default caching solution for performance-focused, scalable .NET applications.

For foundational caching concepts, see Memory Caching in .NET. If you need advanced distributed caching with Redis, check out Dual-Key Redis Caching in .NET.

Frequently Asked Questions

What is HybridCache in .NET 9?

HybridCache is a new caching API in ASP.NET Core (.NET 9) that combines in-memory (L1) and distributed (L2) caching into a single, unified interface. It automatically synchronizes between the two layers, handles serialization, and provides stampede protection out of the box. You configure it with AddHybridCache() in your DI setup.

How is HybridCache different from IMemoryCache and IDistributedCache?

With the traditional approach, you manage IMemoryCache (L1) and IDistributedCache (L2) separately — writing manual synchronization logic, handling serialization yourself, and duplicating expiration policies. HybridCache wraps both layers into one API: GetOrCreateAsync checks L1 first, falls back to L2, and if both miss, calls your factory method and populates both caches automatically.

Does HybridCache support Redis?

Yes. HybridCache uses IDistributedCache as its L2 backend. You can plug in any distributed cache provider, including Redis, SQL Server, or NCache. Just register your Redis distributed cache (AddStackExchangeRedisCache) alongside AddHybridCache(), and HybridCache will use it as the L2 layer.

What is stampede protection in HybridCache?

Stampede protection prevents multiple concurrent requests from hitting your database when a cache entry expires. Without it, if 100 requests arrive simultaneously for the same expired key, all 100 would query the database. HybridCache ensures only one request fetches the data while the others wait for the result.

That's all from me for today.

dream BIG!

About the Author

Stefan Djokic is a Microsoft MVP and senior .NET engineer with extensive experience designing enterprise-grade systems and teaching architectural best practices.
